Rest assured, a software code review isn’t the same as an official auditor going over your financial records. Auditors are looking for regulatory compliance, errors, omissions, etc. – basically searching for where you’ve either made mistakes or may have cheated. And most of the time, the financial auditor doesn’t care what the motive was; if there’s something wrong, you’re in trouble. This is what makes such events, in most people’s minds, undesirable, unpleasant, and to be avoided if at all possible.

A software code review is entirely different. It is designed not to punish but to reward.

Code reviews seek out opportunities for improvement: ways to make applications run faster, more efficiently, and more securely, and to offer a better user experience. Nothing about this type of review should cause apprehension or fear. It is also a critical part of the Continuous Improvement process.

What’s axiomatic is that the older a code base, the more it needs a thorough code review. As the years go by, better techniques, tools, frameworks, platforms, and approaches come into existence, and older code never had the opportunity to take advantage of them.

In fact, as the technology itself advances, entirely new capabilities emerge. Consider how different a web interface to a Cloud-based application is from a PC workstation application talking to a behemoth 20th-century mainframe via an analog modem over a landline. Many projects have had, as their prime goal, migrating decades-old system functionality into the 21st century, and that work never seems to end.

But in a more general sense, there’s no such thing as 100% perfect code. Throughout the history of software, and to this very day, most applications have been developed under pressure to meet business deadlines. Releases are shoved out the door to hit a marketing and revenue goal for a particular fiscal period. Or fixes and updates are slapped together into patches to placate top customers who are ready to throw a vendor out and go with a competitor. It’s just real life.

When work is done with the clock ticking and managers and supervisors demanding that deadlines be met, quality often suffers for the sake of speed to market.

This is how so-called “Spaghetti Code” comes about.

“In computer programming, code which flagrantly violates the principles of structured, procedural programming. Usually this means using lots of GOTO statements (or their equivalent in whatever language is being used) – hence the term, which suggests the tangled and arbitrary nature of the program flow. Spaghetti code is almost impossible to debug and maintain, and rarely works well.”

~The Urban Dictionary

But even well-structured code isn’t immune to common errors with unintended consequences, such as memory leaks or, worse, inadvertent “back doors” that leave security holes a hacker might discover and exploit.
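
To make the “back door” point concrete, here is a minimal Python sketch of one classic hole a reviewer routinely flags: building a SQL query by pasting user input directly into the query text (SQL injection). The `users` table and the function names `find_user_unsafe` and `find_user_safe` are hypothetical, invented for illustration. The fix shown, a parameterized query through Python's standard `sqlite3` module, is one common remedy rather than the only one.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Vulnerable: user input is pasted straight into the SQL text,
    # so input like "x' OR '1'='1" changes the meaning of the query.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str):
    # Reviewed version: the "?" placeholder makes sqlite3 bind the value,
    # so the input is always treated as data, never as SQL.
    query = "SELECT id, name FROM users WHERE name = ?"
    return conn.execute(query, (username,)).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

    attack = "x' OR '1'='1"
    print(find_user_unsafe(conn, attack))  # leaks every row in the table
    print(find_user_safe(conn, attack))    # matches nothing
```

Both functions pass a simple functional test with a normal username, which is exactly why this kind of flaw tends to survive requirements-based testing and only surfaces when someone reads the code.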

Then there are the plain inefficiencies that come from a lack of imagination or advanced training. There is rarely only one way to write a module of code to accomplish a particular function. One developer might know how to do it in only a few lines, while another may take many, many more lines to get it done. Both versions do the same thing when the code executes, but one may use far less memory or run much faster.
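
As a hypothetical illustration (the function names are invented for this sketch), here are two Python functions that both check whether a list contains a duplicate value. They return the same answer, but the first compares every pair of items, so its work grows roughly with the square of the list length, while the second makes a single pass using a set. Note that the faster version actually trades some extra memory for speed; weighing trade-offs like that is exactly what a reviewer does.

```python
import timeit


def has_duplicates_slow(items: list) -> bool:
    # Compares every pair of items: about n*(n-1)/2 comparisons
    # in the worst case (when there are no duplicates).
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_fast(items: list) -> bool:
    # A set keeps only unique values, so if it is smaller than the
    # list, something repeated. One pass over the data, at the cost
    # of memory for the set (items must be hashable).
    return len(set(items)) != len(items)


if __name__ == "__main__":
    data = list(range(2000))  # no duplicates: the worst case for both
    for fn in (has_duplicates_slow, has_duplicates_fast):
        seconds = timeit.timeit(lambda: fn(data), number=1)
        print(f"{fn.__name__}: {seconds:.4f}s")
```

Even at a few thousand items the gap is noticeable, and it widens as the data grows, yet both versions would pass the same functional test.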

Then there is the whole idea of “not being able to see the forest for the trees.” Software developers are human beings, and therefore have egos and pride. If they wrote something to the best of their ability, it’s natural for them to feel it must be good. Every time they look at it, it looks fine, just as they wrote it. That means their assessment of their own work is rarely objective or unbiased.

Quality Control: You Never Save Money Avoiding It (Here is Why)

In a typical development environment, a developer will write a section/module of code and then unit test it to ensure it does what he/she intended it to do. When the developer is satisfied that their code is working, it then goes to a Quality Control (QC) person to independently test it. This is where many code inefficiencies slip through the cracks.

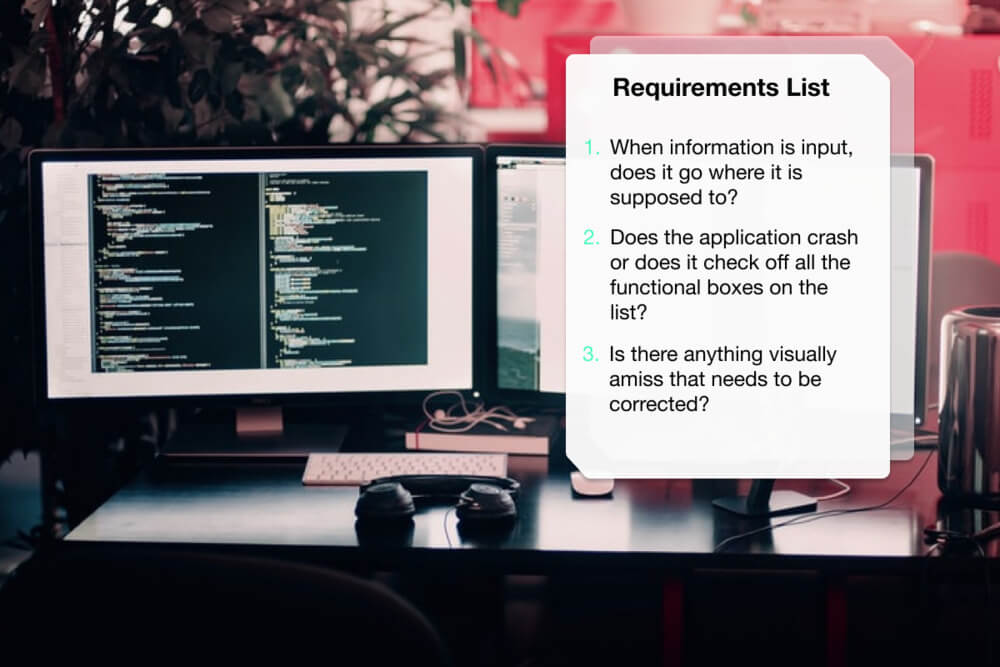

This is because most QC testers are testing for successful functionality against a requirements list. When information is entered, does it go where it is supposed to? Does the application crash, or does it check off all the functional boxes on the list? Is anything visually amiss that needs to be corrected? The QC process doesn’t typically involve reading the source code to see whether the desired functionality was achieved in the most efficient, optimal, and secure manner.

Most bugs are detected and corrected when someone notices something not working or someone complains. And candidly, since many development shops lack adequate QC testing resources, and the primary developer does what little testing gets done, the people complaining usually end up being the end users.

Regardless, no one disputes that speed to market sometimes wins the argument over perfection (or at least near perfection). This is also why software applications have “versions.” It is assumed that version 2.0 will be better than version 1.0, that 3.0 will be better than 2.0, and so on. However, a succession of major and minor releases, while fixing discovered deficiencies and adding new capabilities, may never uncover fundamental flaws put into the code during that initial rushed development process.

Third-Party Code Review: How It Works

This is where a third-party code review comes in, and why it is essential. As noted, programmers may struggle to be objective and unbiased about the quality of their own code. They also may not be fully up to date on the latest methods and techniques for achieving specific programmatic results. Software development, like all technology, is in a constant state of evolution and advancement. That means an independent pair of eyes looking at a requirement, and at the software solution for that requirement, just might bring fresh ideas on how it could be done better. Those independent eyes might also spot inefficiencies or previously undiscovered errors that could be corrected.

Everyone has heard the old cliché:

If it ain’t broke, don’t fix it.

When it comes to software application development, that’s a dangerous mindset. Just because software “works” doesn’t mean it is working as well as it can. If a race car has a top speed of 180 mph, and an engineer can tweak the engine to produce much more horsepower and push the top speed past 200 mph, that doesn’t mean the car was previously “broke.” It just means there was an opportunity for improvement.

There is always plenty of opportunity for improvement in software. What would it mean for a company’s customers if its software application could run three times faster than it does today and use half as much memory? That could mean much greater customer satisfaction, and it could also be a new competitive advantage.

It’s common these days for software vendors to invest a lot of time and money in improving their User Interfaces (UI), e.g., upgrading old Visual Basic/Windows-type interfaces to slick, colorful web-based interfaces that are more intuitive and aesthetically pleasing. This isn’t a wasted effort; such upgrades are often sorely needed.

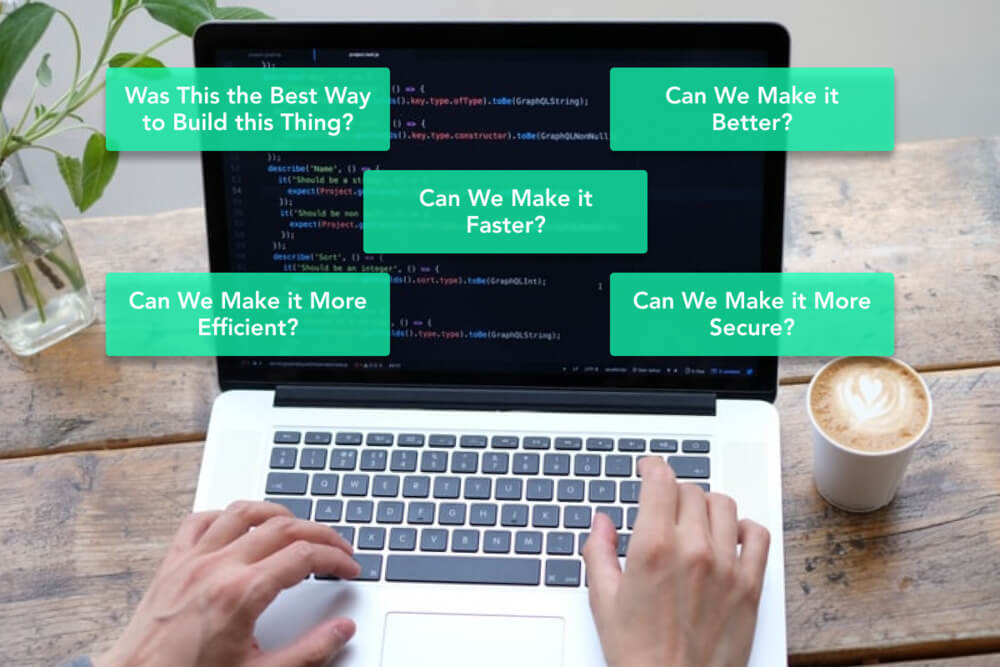

But how often do software vendors look under the hood at the core code base and ask: Was this the best way to build this thing? Can we make it better? Faster? More efficient? More secure? The answers to such questions are the heart of Continuous Improvement and Optimization.

Could optimized Open Source code modules replace old spaghetti modules written five to ten years ago? Are there low-code tools available to replace functions that are outdated and slow? Are there third-party SaaS services that could take over peripheral functions currently handled in-house by custom code that is difficult and costly to maintain?

If any of these questions strike a chord, then a third-party code review must be considered.

Third-party code reviews aren’t difficult to arrange, nor are they necessarily expensive. It’s simply a matter of hiring an independent software development vendor that offers such services. They will act as an independent reviewer, sit down with you, and, through dialog, come to understand the application’s core functions and use cases. Then, under a strict NDA, they will review your source code with an eye toward finding and recommending improvements and/or remediation of any issues discovered.

The deliverable is a report.

With the findings report in hand, it is up to the software development organization itself to decide what to do with the findings and recommendations. Often, the report then becomes an objective guide for improvements to the overall software development roadmap. And the organization can take heart that the direction for continuous improvement of its system is based not on subjective, anecdotal input or on whichever wheel squeaks loudest, but on a documented, objective review.

Pro Tip: A documented analysis and set of recommendations from a third party can do wonders for justifying funding to get things done, in a way that an internal wish list rarely can.

Conclusion

If you’ve never had a comprehensive code review done on a system that’s been around for any significant length of time, you should look into commissioning one posthaste. Then think seriously about having one done periodically, such as annually or at least every few years. Doing one in conjunction with every major release is also a very good idea.

And if you do, you’ll be glad you did, especially when you discover how your system can run faster and more efficiently, consume fewer system resources, be more secure against hacking, comply with official standards, become easier to maintain and more extensible, and ultimately foster more and more happy users. After all, isn’t that the ultimate objective of any world-class software development organization?