You never know whether the software you develop or use is good enough until you test it, which makes software testing an integral part of any development process. A comprehensive, result-oriented approach usually requires both manual and automated testing: each contributes to the quality of the software, and together they can save a lot of time and help improve ROI.

One of Softengi's customers asked our company to thoroughly test one of its applications, and the Softengi QA Team took on the project.

The primary challenge was that the application was very large, rich in functionality, and made up of many modules. It was also almost 10 years old and had never undergone automated testing. The task was to test it both manually and with automated testing tools.

The automated testing portion required the most effort. Our testing engineers had to conduct smoke testing, which called for many autotests covering the basic functions of the application. Another issue was that the application had originally been designed for Internet Explorer but was also supposed to work in other browsers, namely Chrome, Firefox, and Opera. Automated cross-browser testing was therefore indispensable.

Details of Our Automated Testing Approach

The Project QA team performing the automated testing of the application included highly qualified test automation engineers. To increase the efficiency of their work, the team took the following test automation approach:

- Out of all possible test scenarios, the team had to choose the most important ones, those covering the basic application functionality, and then write the respective autotests. A discussion based on the proposed criteria resulted in the selection of about 1000 test scenarios to be covered by autotests.

- A separate environment for test automation was set up.

- A flexible configuration of autotests was provided, which allowed these autotests to be used for several different versions of the application.

- During the active phase of running the autotests, five different browsers were used.

- The start trigger for autotests was configurable: autotests could be run manually, after every build, or on a schedule.

- Autotests for the selected 1000 test scenarios ran in different browsers on several environments every night following the start trigger.

- Manual testers could separately restart any autotest suite that failed for any reason until the true cause of the failure was identified.

- Continuous integration was ensured by using TeamCity.

- Jira and SpiraTest test management systems were used to streamline the management of the test process.

- A version control system supporting multiple AUT versions was used.

- All in all, the QA team leveraged the following technologies: IBM Rational Functional Tester + Java; Telerik + C#; Jira + TeamCity; VMware.

- The test result reports were customized (i.e., separate reports were prepared for the management team and technical teams).

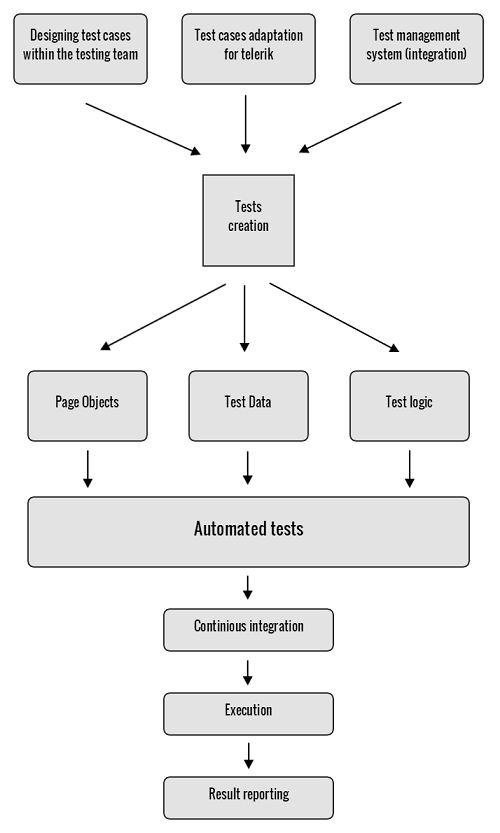

- The QA team followed a clearly defined automated testing procedure (see Figure 1).
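To make the configuration and restart steps above more concrete, here is a minimal sketch in Python. It is not the team's actual implementation, which was built with IBM Rational Functional Tester, Telerik, and TeamCity. It only illustrates the idea of running one suite across a matrix of browsers, environments, and application versions, and then restarting only the combinations that failed. The environment names, version strings, and every function name are hypothetical, and the browser list contains only the four browsers the article names. The start trigger (manual, per build, or scheduled) would live in the CI server and is not shown.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, Iterable, List

# Hypothetical values: only the browser names come from the article.
BROWSERS = ["ie", "chrome", "firefox", "opera"]
ENVIRONMENTS = ["env-a", "env-b"]
APP_VERSIONS = ["1.0", "2.0"]


@dataclass(frozen=True)
class RunConfig:
    """One combination of browser, environment, and application version."""
    browser: str
    environment: str
    app_version: str


def build_matrix(browsers: Iterable[str],
                 environments: Iterable[str],
                 app_versions: Iterable[str]) -> List[RunConfig]:
    """Return every browser/environment/version combination to test."""
    return [RunConfig(b, e, v)
            for b, e, v in product(browsers, environments, app_versions)]


def run_suite(suite: Callable[[RunConfig], bool],
              configs: Iterable[RunConfig]) -> Dict[RunConfig, bool]:
    """Run the suite once per configuration and record pass/fail."""
    return {cfg: suite(cfg) for cfg in configs}


def rerun_failed(suite: Callable[[RunConfig], bool],
                 results: Dict[RunConfig, bool]) -> Dict[RunConfig, bool]:
    """Re-run only the failed configurations and return updated results."""
    updated = dict(results)
    for cfg, passed in results.items():
        if not passed:
            updated[cfg] = suite(cfg)
    return updated


if __name__ == "__main__":
    attempts: Dict[RunConfig, int] = {}

    def demo_suite(cfg: RunConfig) -> bool:
        # Stand-in for a real autotest suite: fails on the first Opera
        # attempt to imitate a transient failure, passes otherwise.
        attempts[cfg] = attempts.get(cfg, 0) + 1
        return not (cfg.browser == "opera" and attempts[cfg] == 1)

    matrix = build_matrix(BROWSERS, ENVIRONMENTS, APP_VERSIONS)
    first_pass = run_suite(demo_suite, matrix)
    failed = [cfg for cfg, ok in first_pass.items() if not ok]
    print(f"{len(failed)} of {len(matrix)} configurations failed")

    second_pass = rerun_failed(demo_suite, first_pass)
    print("all passed after rerun:", all(second_pass.values()))
```

Keeping the matrix as data rather than hard-coding it into the tests is what lets the same autotests be pointed at a new application version or environment without being rewritten. A restart that passes does not by itself explain the original failure, which is why the cause still has to be investigated.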

Igor Sharinsky, Head of the Softengi QA Department: “Because of the approach taken, the automated testing conducted by our professional team was successful and helped save more than 150 hours per release. In general, the prepared autotests have already been used in a dozen releases. These autotests have already been run about 300 times and continue to run every night.”

If you are interested in scheduling a meeting with Igor Sharinsky, Head of Softengi QA Department, to find out more about Softengi testing projects, or just have an initial consultation, please send an email to [email protected].